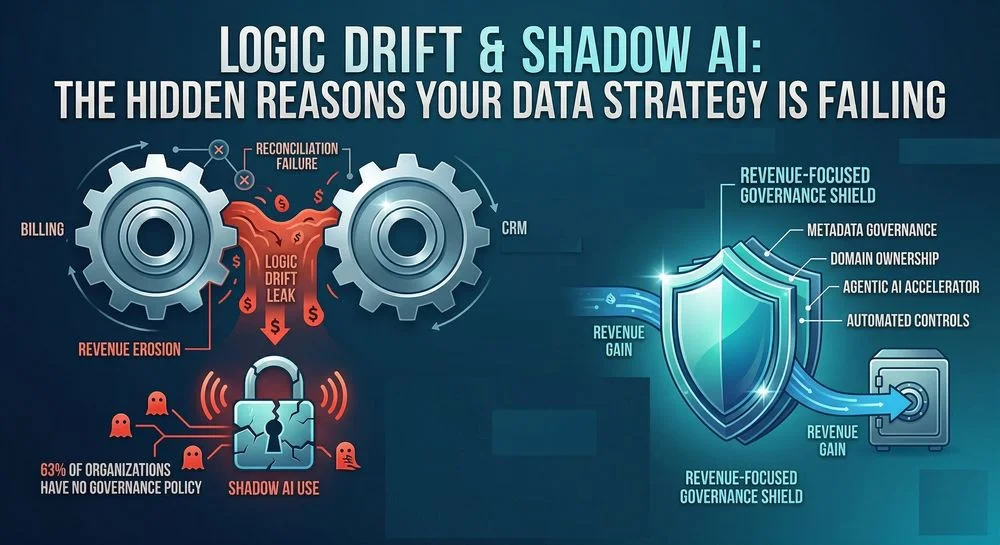

Logic Drift & Shadow AI: The Hidden Reasons Your Data Strategy Is Failing

Boardrooms often treat a data leak as a pure cybersecurity event: a hacker gets in, data gets out, and IT cleans up. But for developers and data teams, the failure often starts much earlier. Value leaks long before data leaks publicly, through broken reconciliation, drifting business logic, stale permissions, and employees copying sensitive records into unapproved AI tools. Calling all of this a security problem alone is a framing error. It is also a systems design problem.

Organizations need to recognize that data is both a business asset and an engineering responsibility. If the logic governing entitlements, billing, access, and reporting diverges across systems, the result can look like a breach even when no attacker is present. Likewise, if teams use AI tools outside approved controls, sensitive data can leave the system without anyone noticing until the damage is done. The conversation around data leaks needs an update: less boardroom abstraction, more attention to the technical patterns that create silent exposure.

The $100M+ Club Is (Unfortunately) Not Exclusive

The question for every CEO is no longer whether a breach will happen, but whether the organization’s architecture and operating discipline will allow it to survive one. Technical debt, weak controls, and unverified logic do not stay confined to engineering. They eventually show up in revenue, trust, and enterprise value.

Equifax remains one of the clearest reminders. In 2017, attackers exploited a known vulnerability after a protective software patch was not deployed, exposing the personal data of more than 147 million people. The incident became a case study in how an ordinary engineering failure, left unresolved, can turn into an extraordinary business disaster.

Equifax was not an outlier. T-Mobile, Marriott, and, more recently, Marks & Spencer each showed a different version of the same story: once controls fail, costs extend far beyond the first incident. The visible event may be a breach or ransomware attack, but the underlying issue is usually more mundane: unpatched systems, weak visibility, fragmented ownership, or data moving through environments that no one is actively governing.

What Happens When the Leak Is Not a Hack?

Not every leak starts with an external attacker. Some of the most expensive failures begin inside the organization, and they do not always look dramatic in the moment. IBM’s recent reporting points to one fast-growing category: Shadow AI, where employees upload sensitive company or customer data into unauthorized AI tools outside approved security and governance controls.

From a developer perspective, Shadow AI is not just a policy violation. It is an uncontrolled data egress path. The problem is not that people use AI; the problem is that the data path bypasses logging, redaction, retention rules, access controls, and vendor review. Once that happens, teams lose the ability to answer basic technical questions: What data left? Who sent it? Was it masked? Was it retained? Can access be revoked?

Then there is logic drift: the slow divergence of business rules across systems that are assumed to agree. In one case I encountered, Salesforce did not reconcile cleanly against actual billing in SAP. Customer entitlements were being interpreted differently across regions and downstream processes. Nothing looked broken at a glance. There was no dramatic outage and no attacker in sight. But the mismatch silently affected the bottom line for multiple quarters, and by the time the damage was quantified, the loss exceeded $100 million.

That is why logic drift matters to developers. It usually begins as a perfectly ordinary implementation detail: a formula rewritten in another service, a transformation adjusted during a backfill, a field reused with a slightly different meaning, or a dashboard metric that no longer matches the source system. Over time, these minor differences compound into reporting errors, entitlement mistakes, revenue leakage, and decisions made on corrupted assumptions.

How Developers Can Detect Logic Drift Earlier

The hard part about logic drift is that each system may look correct in isolation. The failure only becomes visible when outputs are compared across the workflow. That makes detection an engineering discipline, not a one-time audit. A practical starting point is to treat business logic as a shared contract rather than an implementation detail buried inside each application.

· Define a source of truth for critical calculations such as entitlement status, billable state, renewal dates, pricing logic, and revenue recognition inputs.

· Version business rules and data contracts so downstream services, data pipelines, and dashboards can be checked against the same definition rather than reinterpreting it locally.

· Run automated reconciliation jobs between operational systems and analytical systems. If Salesforce, SAP, the warehouse, and finance reports disagree beyond a threshold, alert on it like any other production issue.

Example: a basic SQL reconciliation check. To operationalize this, you can run a periodic join across your ingested staging tables to flag status mismatches:

- MATCH: Join CRM_Entitlements and ERP_Billing on Global_Customer_ID.

- VALIDATE: Compare logical status (e.g., is_active vs. is_paid).

- ALERT: If values diverge, trigger a "Logic Drift" event for immediate remediation.

· Add contract tests around high-risk transformations. When schemas, formulas, or mappings change, validate expected outputs before data is promoted downstream.

· Keep auditable snapshots of critical records so teams can compare what a user should have had access to, what the billing system recorded, and what the analytics layer displayed at a given point in time.
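The MATCH, VALIDATE, and ALERT steps from the reconciliation example can be sketched in a few lines. This is a minimal illustration, not a production job: it uses Python's built-in sqlite3 module with an in-memory database, and the table layouts, sample rows, and the function names `find_status_mismatches` and `build_demo_db` are assumptions made for the demo. Only the table and column names come from the example above.

```python
import sqlite3


def find_status_mismatches(conn):
    """MATCH on the shared customer ID, then VALIDATE that the two statuses agree."""
    query = """
        SELECT c.global_customer_id, c.is_active, e.is_paid
        FROM crm_entitlements AS c
        JOIN erp_billing AS e
          ON e.global_customer_id = c.global_customer_id
        WHERE c.is_active <> e.is_paid
        ORDER BY c.global_customer_id
    """
    return conn.execute(query).fetchall()


def build_demo_db():
    """Create hypothetical staging tables with one deliberately mismatched customer."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE crm_entitlements (
            global_customer_id TEXT PRIMARY KEY,
            is_active INTEGER NOT NULL
        );
        CREATE TABLE erp_billing (
            global_customer_id TEXT PRIMARY KEY,
            is_paid INTEGER NOT NULL
        );
        INSERT INTO crm_entitlements VALUES ('C001', 1), ('C002', 1), ('C003', 0);
        INSERT INTO erp_billing VALUES ('C001', 1), ('C002', 0), ('C003', 0);
        """
    )
    return conn


if __name__ == "__main__":
    conn = build_demo_db()
    # ALERT: printing stands in for whatever alerting channel a team actually uses.
    for customer_id, is_active, is_paid in find_status_mismatches(conn):
        print(f"Logic Drift: {customer_id} is_active={is_active} is_paid={is_paid}")
```

Note that an inner join only compares customers present in both systems. Records that exist in one system but not the other are a separate class of drift and would need their own check, for example a pair of anti-joins.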

This is where many data strategies fail. Teams monitor uptime, pipeline completion, and dashboard refreshes, yet they do not monitor whether the logic still means the same thing across systems. A green pipeline can still deliver the wrong answer.
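One way to catch a green pipeline that delivers the wrong answer is a contract test: a small set of golden cases that every reimplementation of a rule must reproduce. The sketch below is illustrative only. The rule ("billable means paid through today, inclusive, and not suspended"), the version label, and all names are hypothetical stand-ins for whatever a team's governed definition actually says.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical version label for the shared rule definition.
RULE_VERSION = "billable_state/v2"


@dataclass(frozen=True)
class Account:
    paid_through: date
    suspended: bool


def is_billable(account: Account, today: date) -> bool:
    """Reference definition: paid through today (inclusive) and not suspended."""
    return account.paid_through >= today and not account.suspended


# Golden cases: (account, evaluation date, expected result under RULE_VERSION).
GOLDEN_CASES = [
    (Account(date(2024, 6, 30), False), date(2024, 6, 30), True),   # boundary day counts
    (Account(date(2024, 6, 29), False), date(2024, 6, 30), False),  # lapsed yesterday
    (Account(date(2024, 7, 31), True), date(2024, 6, 30), False),   # paid but suspended
]


def check_contract(implementation):
    """Return the golden cases that the given implementation gets wrong."""
    failures = []
    for account, today, expected in GOLDEN_CASES:
        if implementation(account, today) != expected:
            failures.append((account, today, expected))
    return failures


def dashboard_is_billable(account: Account, today: date) -> bool:
    """A drifted copy: the boundary comparison was rewritten as strict."""
    return account.paid_through > today and not account.suspended


if __name__ == "__main__":
    print(RULE_VERSION, "reference failures:", len(check_contract(is_billable)))
    print(RULE_VERSION, "dashboard failures:", len(check_contract(dashboard_is_billable)))
```

The drifted copy differs by a single character, and both functions would run without error in their own pipelines. Only the shared golden cases expose the disagreement on the boundary day.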

How to Reduce Shadow AI Without Killing Productivity

Shadow AI is often a design failure as much as a governance failure. If the approved path is slower, harder, or less useful than pasting data into a public chatbot, users will route around policy. Developers, data teams, and product designers need to make the safe path the easiest path.

· Provide an approved AI access layer or gateway so prompts and uploads flow through logging, policy enforcement, redaction, and vendor-approved models.

Developer → Approved Gateway (Redaction / Logging) → Enterprise LLM

· Block or warn on sensitive fields such as account numbers, personal data, pricing terms, customer lists, or contract text before they leave controlled environments.

· Use role-based access, service accounts, and scoped tokens for AI agents exactly as you would for human users. An AI assistant should never inherit broad access simply because it is convenient.

· Separate experimentation from production. Developers can test models in sandboxes, but production data should require approved connectors, masking rules, and audit trails.

· Log prompts, tool calls, and downstream actions for internal AI systems so teams can investigate misuse, drift, or unexpected outputs after the fact.
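The gateway idea in the list above can be sketched as a function that redacts sensitive fields and writes an audit log entry before anything is forwarded. This is a rough sketch under stated assumptions: the two regular expressions are deliberately simple placeholders rather than a complete sensitive-data detector, and `forward_prompt`, its `send` callback, and the logger name are hypothetical, not any vendor's API.

```python
import logging
import re

logger = logging.getLogger("ai_gateway")  # hypothetical logger name

# Illustrative patterns only; real detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "ACCOUNT_NUMBER": re.compile(r"\b\d{10,16}\b"),
}


def redact(prompt: str):
    """Replace matches with a [LABEL] placeholder and count redactions per label."""
    counts = {}
    redacted = prompt
    for label, pattern in PATTERNS.items():
        redacted, n = pattern.subn(f"[{label}]", redacted)
        if n:
            counts[label] = n
    return redacted, counts


def forward_prompt(user_id: str, prompt: str, send):
    """Redact, record who sent what kind of data, then hand off to the approved model.

    `send` is a caller-supplied function standing in for the approved model client.
    """
    redacted, counts = redact(prompt)
    # Log metadata rather than the raw prompt, so the audit log is not itself a leak.
    logger.info("user=%s redactions=%s chars=%d", user_id, counts, len(redacted))
    return send(redacted)
```

For example, `redact("Refund jane.doe@example.com on account 1234567890123 today")` returns the text `Refund [EMAIL] on account [ACCOUNT_NUMBER] today` together with a count of one redaction per label. Whether to block, warn, or silently mask on a match is a policy decision the sketch leaves open.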

The key mindset shift is simple: treat every AI-enabled workflow as another application surface. If a user interface, plugin, bot, or internal agent can read, transform, or export data, it deserves the same level of design scrutiny as any other production system.
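Treating an AI workflow as an application surface implies giving each agent its own identity with explicit scopes, an audit trail, and a revocation switch. The following is a minimal in-memory sketch of that idea. The scope strings, class names, and agent name are all hypothetical, and a real deployment would rely on an existing identity provider rather than hand-rolled checks.

```python
from dataclasses import dataclass, field


class AccessDenied(Exception):
    """Raised when an agent requests a scope it does not hold or has been revoked."""


@dataclass
class AgentIdentity:
    name: str
    scopes: frozenset  # explicit grants; nothing is inherited from a human user
    revoked: bool = False


@dataclass
class Authorizer:
    # Each entry is (agent name, requested scope, allowed?).
    audit_log: list = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, scope: str) -> None:
        """Record every decision, then raise if the request is not allowed."""
        allowed = (not agent.revoked) and scope in agent.scopes
        self.audit_log.append((agent.name, scope, allowed))
        if not allowed:
            raise AccessDenied(f"{agent.name} may not use {scope}")


if __name__ == "__main__":
    authorizer = Authorizer()
    bot = AgentIdentity("support-bot", frozenset({"tickets:read"}))
    authorizer.authorize(bot, "tickets:read")  # allowed and logged
    try:
        authorizer.authorize(bot, "billing:export")  # denied and logged
    except AccessDenied as exc:
        print(exc)
```

Denied requests are logged before the exception is raised, so an investigation can see what an agent attempted as well as what it was permitted to do.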

The Hidden Cost of the Data Leak

Measuring a leak only by fines or headlines understates the damage. The real cost is layered and often delayed. Some of it is immediate—incident response, forensics, notification, and remediation. Some of it arrives later through litigation, customer churn, operational rework, downgraded trust, and years of defensive spending that slow product and platform progress.

Logic drift has a similar profile. The first signal may be small: a dashboard discrepancy, a support escalation, a finance exception, or a renewal dispute. But once teams have to unwind months of incorrect logic across applications, data pipelines, entitlements, and customer communication, the remediation cost multiplies. What looked like a reporting issue becomes a cross-functional repair program.

That is why the distinction between a breach and a data integrity failure matters less than people think. From an engineering standpoint, both are failures of control. In one case, the wrong party accessed the data. In the other, the system itself stopped preserving the intended meaning of the data. Either way, the business pays.

Reframing the Conversation for Revenue Protection

Terrifying as they seem, these failures are reducible with better technical discipline. Drawing on direct experience recovering more than $100 million in leaked revenue across multiple enterprises, here are five steps leaders and technical teams can act on without changing the core mission of the business.

· Treat critical business logic as production code. Assign owners, version it, document it, and test it whenever upstream or downstream systems change.

· Dismantle silos with shared controls. Security, data engineering, finance systems, and analytics teams should reconcile against the same governed definitions instead of maintaining parallel truths.

· Treat AI as an identity, not a feature. Every internal bot, assistant, or agent should have scoped permissions, auditable activity, and revocable access.

· Automate controls for Shadow AI. Policies matter, but enforcement matters more: approved tooling, redaction, egress controls, and monitoring should do the heavy lifting.

· Build resilience for both breaches and drift. Teams need runbooks not only for cyber incidents, but also for reconciliation failures, entitlement errors, bad model outputs, and downstream rollback or replay.

The boardroom that treats data governance as a compliance line item has already chosen its fate. Resilience is not built in the aftermath. It is forged in the quiet, unglamorous engineering decisions made long before the incident, when strategy still has the luxury of time.